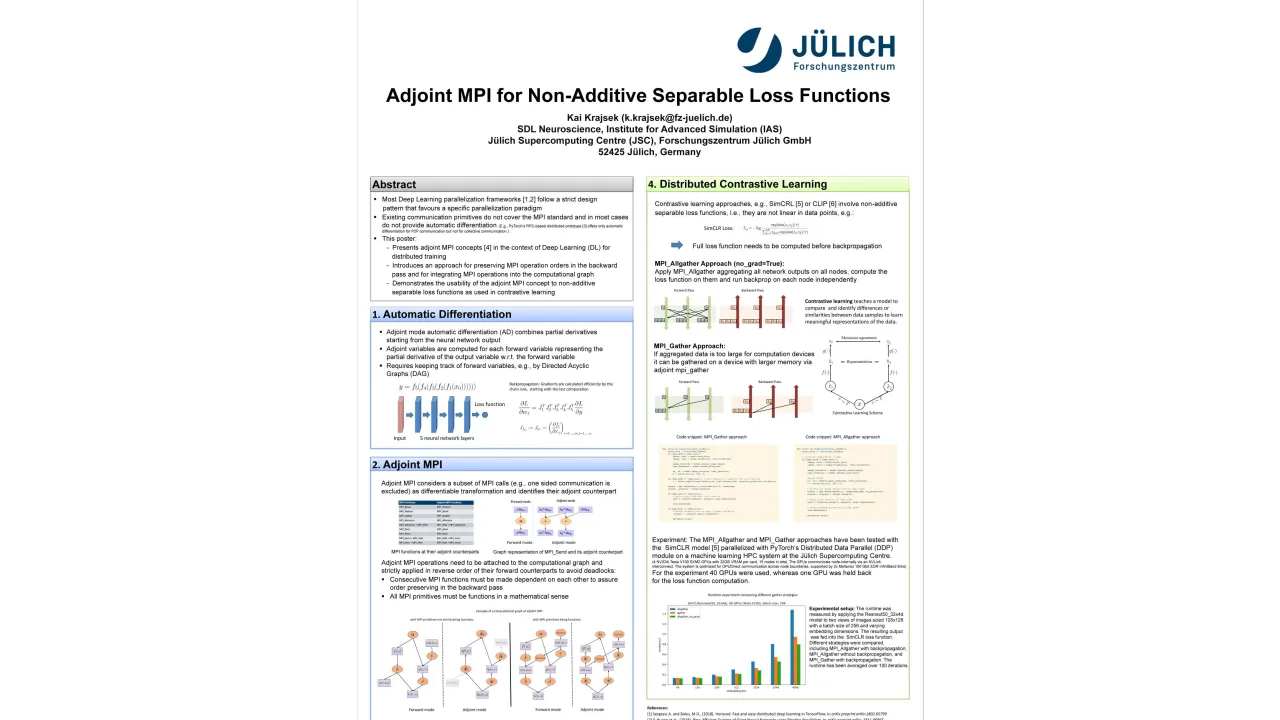

Adjoint MPI for Non-Additive Separable Loss Functions

Monday, May 22, 2023 3:00 PM to Wednesday, May 24, 2023 5:00 PM · 2 days 2 hr. (Europe/Berlin)

Foyer D-G - 2nd Floor

Research Poster

AI Applications · ML Systems and Tools · Numerical Libraries · Parallel Programming Languages

Information

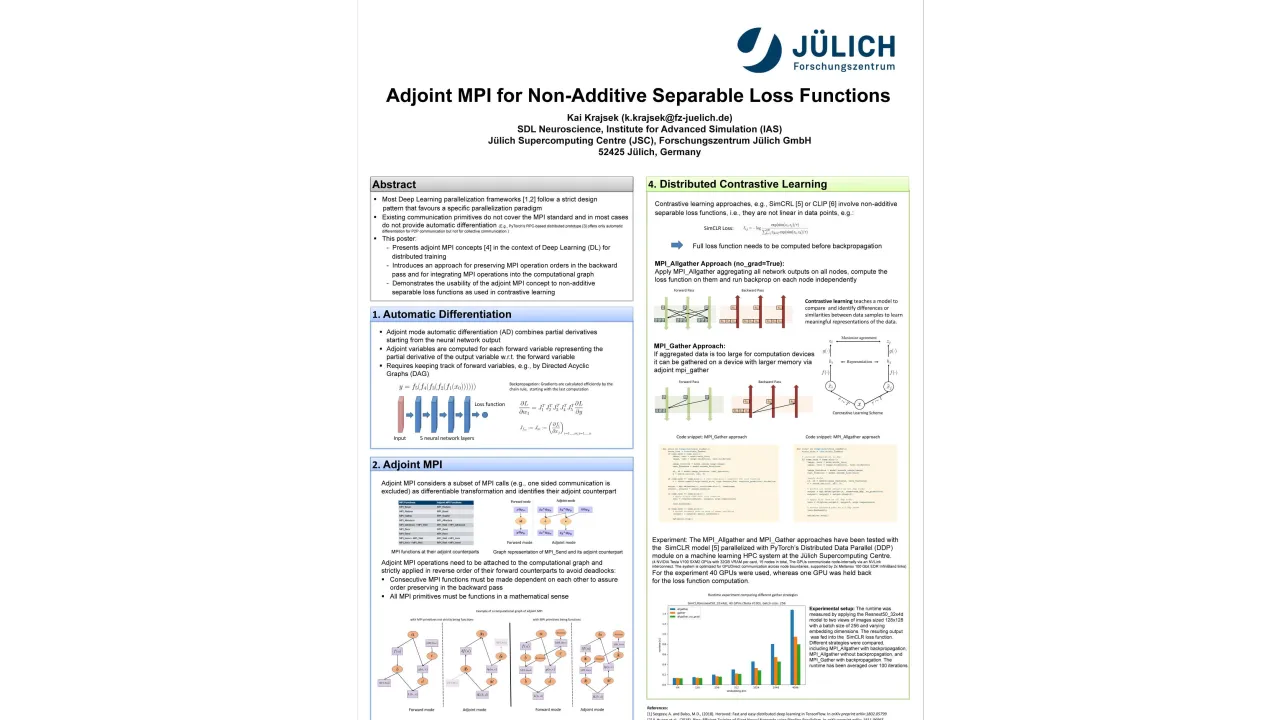

Most contemporary frameworks for parallelizing deep learning models follow a fixed design pattern that favours a specific parallelization paradigm. More flexible libraries, such as PyTorch.distributed, provide communication primitives but lack the flexibility and capabilities of the MPI standard.

PyTorch.distributed currently supports automatic differentiation for point-to-point communication, but not for collective communication.

However, flexible and efficient communication patterns are important for the non-additive separable loss functions encountered in self-supervised contrastive learning, because each sample's loss term depends on other samples in the batch. This poster explores the implementation of the adjoint MPI concept in distributed deep learning models. It begins with an introduction to the fundamental principles of adjoint modelling, then presents an adjoint MPI concept for distributed deep learning that enables flexible parallelization in conjunction with existing libraries. The poster also covers implementation details and the use of the approach in contrastive self-supervised learning.
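As a rough illustration of the adjoint idea, and not the poster's actual implementation, the sketch below simulates several ranks inside a single Python process. It assumes a toy log-sum-exp loss as a stand-in for a contrastive loss. All function names are hypothetical. It relies on the standard result that the adjoint of an all-gather is a sum reduce-scatter: each rank differentiates its own loss term with respect to the whole gathered buffer, and the contributions for entry k are then summed and returned to rank k.

```python
import math

def allgather(local_values):
    """Simulated all-gather: every rank receives a copy of the full list."""
    return [list(local_values) for _ in local_values]

def reduce_scatter_sum(per_rank_buffers):
    """Simulated sum reduce-scatter: rank k receives the sum of entry k."""
    size = len(per_rank_buffers)
    return [sum(buf[k] for buf in per_rank_buffers) for k in range(size)]

def local_loss_and_grad(rank, gathered):
    """Toy loss term of one rank (assumption): log(sum_j exp(z_rank * z_j)).

    Returns the loss and its gradient w.r.t. every entry of the gathered
    buffer, so the term is not additive over per-sample contributions.
    """
    anchor = gathered[rank]
    logits = [anchor * g for g in gathered]
    shift = max(logits)  # subtract the max for numerical stability
    weights = [math.exp(l - shift) for l in logits]
    total = sum(weights)
    probs = [w / total for w in weights]
    loss = shift + math.log(total)
    grad = [p * anchor for p in probs]  # via the gathered copies
    grad[rank] += sum(p * g for p, g in zip(probs, gathered))  # via the anchor
    return loss, grad

def distributed_loss_and_grad(local_values):
    """Forward: all-gather. Backward: its adjoint, a sum reduce-scatter."""
    buffers = allgather(local_values)
    results = [local_loss_and_grad(r, buf) for r, buf in enumerate(buffers)]
    total_loss = sum(loss for loss, _ in results)  # an all-reduce in practice
    grads = reduce_scatter_sum([grad for _, grad in results])
    return total_loss, grads

def finite_difference(local_values, k, eps=1e-6):
    """Central finite difference of the total loss w.r.t. entry k."""
    up, down = list(local_values), list(local_values)
    up[k] += eps
    down[k] -= eps
    return (distributed_loss_and_grad(up)[0]
            - distributed_loss_and_grad(down)[0]) / (2 * eps)

z = [0.3, -1.2, 0.8, 0.5]  # one scalar "embedding" per simulated rank
loss, grads = distributed_loss_and_grad(z)
max_error = max(abs(grads[k] - finite_difference(z, k)) for k in range(len(z)))
```

In a real multi-process setting the two simulated collectives would be replaced by actual MPI calls, and the backward step would be registered with the automatic differentiation tool in use. The finite-difference comparison only checks that the adjoint communication pattern yields the correct gradient for this toy loss.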

Format

On-site

Beginner Level

20%

Intermediate Level

60%

Advanced Level

20%